If you thought the mouse cursor was a solved problem, Google DeepMind just handed it a fresh job description.

Point at a restaurant in a paused travel clip, say “book this,” and — in the demos DeepMind published on May 12 — Gemini understands the frame, the object and the intent. It doesn’t ask you to copy, paste, or write an essay-length prompt. It tries to do the work for you.

The idea, in plain language

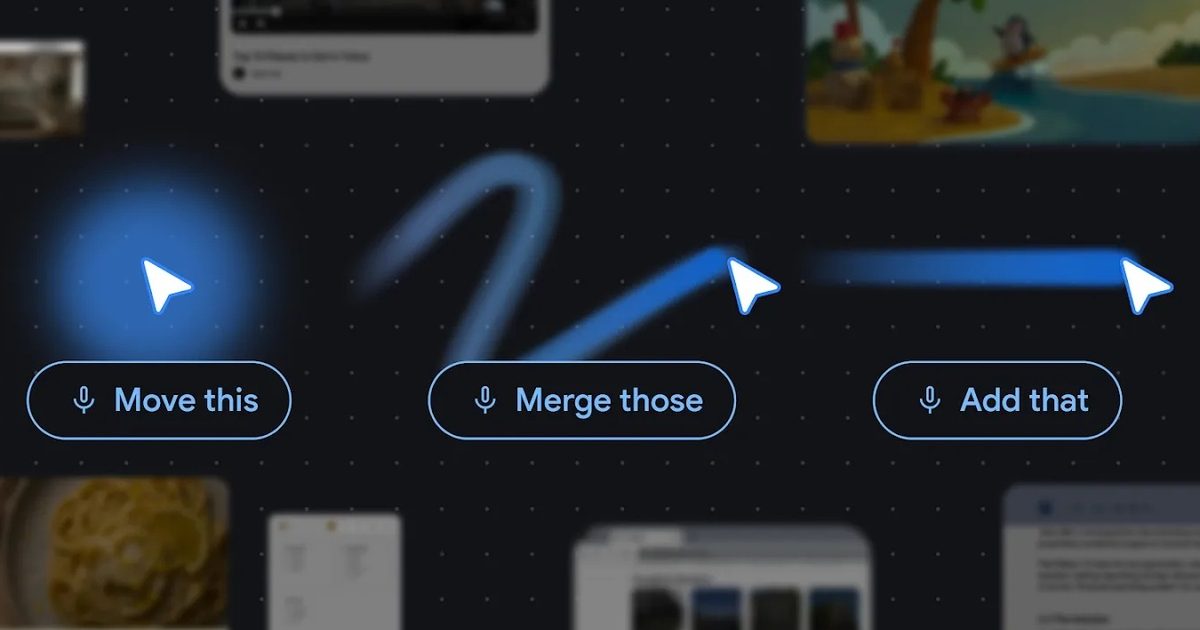

DeepMind’s researchers start from a simple observation: pointing is how humans communicate about the world. We say “this” and gesture; we rarely narrate every detail. Their prototype, called the Magic Pointer in product discussions and described more fully on the DeepMind blog, aims to graft that same shorthand onto screens. Instead of making you drag content into a separate AI window, the AI-enabled pointer captures the visual and semantic context around wherever you hover, then acts on short spoken or typed commands.

In practice the demos are modest and persuasive: edit a photo by pointing and saying what you want changed, hover over a table and ask for a pie chart, highlight a recipe and ask to double all ingredients. Two interactive demos live in Google AI Studio right now: one for image editing and one for finding places on a map.

How it works (as far as DeepMind shows)

The pointer becomes a multimodal signal: location + pixels + brief natural language. Gemini — the multimodal model DeepMind built into the stack — converts the pixel region into a structured entity (a place, date, object, table cell) and uses that structure to fulfill short commands like “compare these” or “summarize this into bullets.” The team distilled four guiding principles: maintain the flow (no detours to separate AI apps), show-and-tell (capture context visually), embrace shorthand (“Fix this”), and turn pixels into actionable entities.
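To make the "location + pixels + brief natural language" bundle concrete, here is a minimal Python sketch of how such a signal might be assembled before it reaches a model. It is an illustration only, not DeepMind's implementation: the names `PointerSignal`, `crop_around` and `build_signal`, the `radius` parameter, and the frame represented as a list of pixel rows are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical frame format: rows of grayscale pixel values.
Frame = List[List[int]]


@dataclass
class PointerSignal:
    """Hypothetical bundle of the three inputs the article lists."""
    location: Tuple[int, int]  # (x, y) position of the cursor
    pixels: Frame              # crop of the screen around the cursor
    command: str               # short spoken or typed request


def crop_around(frame: Frame, x: int, y: int, radius: int) -> Frame:
    """Return the square region within `radius` pixels of (x, y), clamped to the frame."""
    height = len(frame)
    width = len(frame[0]) if height else 0
    top, bottom = max(0, y - radius), min(height, y + radius + 1)
    left, right = max(0, x - radius), min(width, x + radius + 1)
    return [row[left:right] for row in frame[top:bottom]]


def build_signal(frame: Frame, x: int, y: int, command: str, radius: int = 1) -> PointerSignal:
    """Combine cursor location, nearby pixels and a short command into one signal."""
    return PointerSignal((x, y), crop_around(frame, x, y, radius), command.strip())
```

In this sketch the crop is clamped at the screen edges, so pointing at a corner yields a smaller region rather than an error. Turning that region into a structured entity (a place, a date, a table cell) is the model's job and is deliberately left out here.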

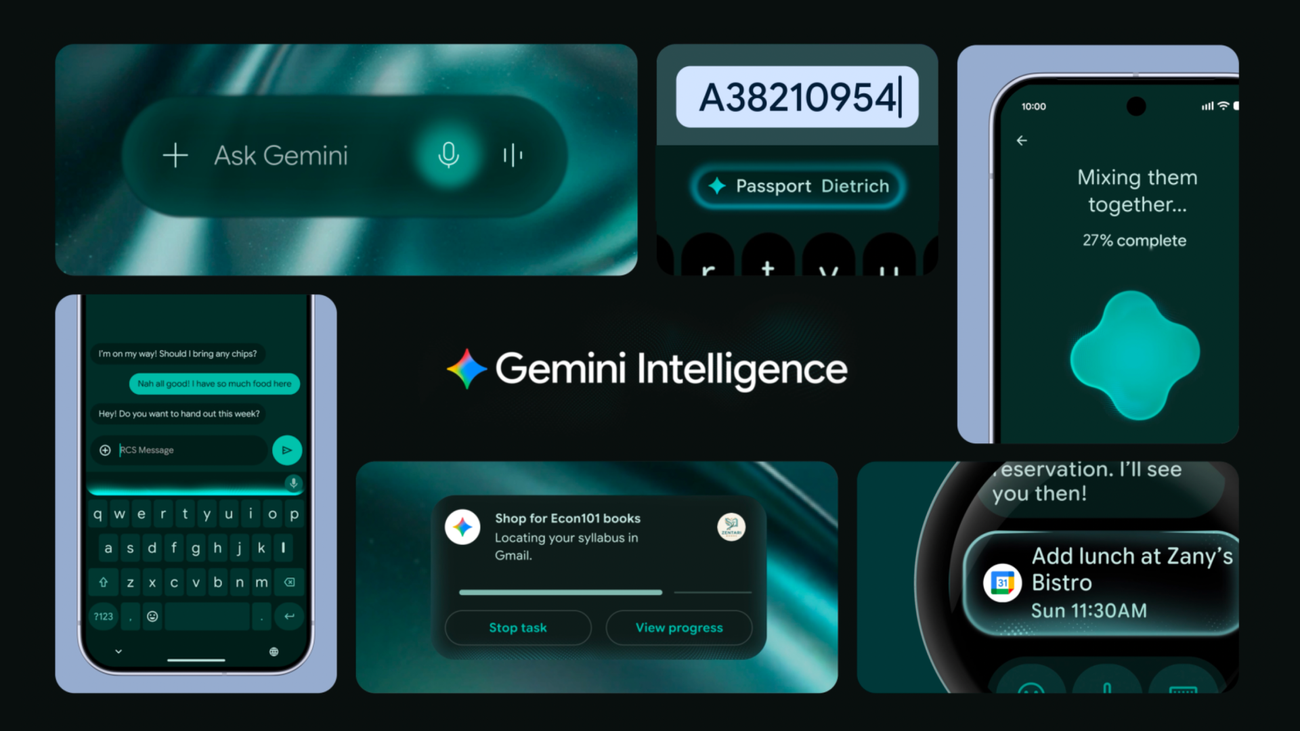

Google is rolling these ideas into several places. Magic Pointer is slated as a headline feature for the new Gemini-first Googlebook laptops, and the pointer-style queries are already being added to Gemini inside Chrome. If you’ve been following Google’s hardware and Gemini strategy, this is a direct extension of that push, which we covered in earlier reporting on Googlebook and the company’s Gemini-first laptop plans. The work also aligns with Google’s broader effort to spread Gemini across Android and other devices, as covered in our look at how Google is embedding Gemini across its platforms.

Why this matters — and why it could be awkward

There’s real convenience potential. Asking a pointer to convert numbers into a chart or to extract the key takeaways from a PDF could shave minutes, or more likely mental friction, from routine tasks. It’s also an accessibility win in principle: users who struggle with fine motor control or long typed prompts could lean on voice and pointing.

But the demos are intentionally experimental. Real-world use exposes thorny problems: how well will gesture-and-speech recognition handle poor lighting, unconventional accents, or crowded webpages? How will the system avoid misinterpreting fleeting mouse movements as intent? DeepMind’s public notes acknowledge those challenges implicitly by emphasizing principles rather than promising immediate perfection.

Privacy is an obvious concern. Some coverage suggests on-device processing as a mitigation; DeepMind hasn’t spelled out deployment details beyond saying the pointer will be woven into Chrome and Googlebook experiences. How much image or audio data stays local versus what goes to cloud models will matter a lot to cautious users and regulators.

Product fit and competition

This isn’t just a novelty feature for shiny new laptops. Even in Chrome, a pointer that understands context could change e-commerce browsing (select a few products and ask Gemini to compare them), research workflows, and content creation. Google is positioning Gemini as the connective tissue across phones, laptops and browsers: Magic Pointer is one piece of that puzzle. If it works well, many tasks people now shove into separate AI chat windows could be handled inline, with less prompting overhead.

Competitors are watching. Gesture and voice control already appear in other ecosystems — from AR headsets to mobile assistants — but DeepMind’s focus on turning pixels into structured entities gives Google a distinctive route: instead of merely tracking where you point, the system tries to “understand” what you meant.

The near term: try it and test it

The experiments are live in Google AI Studio for anyone who wants to poke around. For mainstream users, expect a staged rollout: pointer-powered Gemini features in Chrome first, then deeper integration into Googlebook. If you’re keeping an eye on how Gemini spreads across devices, this is another incremental but meaningful step in Google’s broader rollout across Android and other surfaces.

The mouse cursor is humble and omnipresent. Giving it smarts is less about spectacle and more about meeting people where they already work — with a finger (or a voice) and a short request. Whether the Magic Pointer becomes a daily habit will depend on the bugs it avoids, the privacy guarantees it keeps, and how well it reads a user’s shorthand.